Why traditional analytics is no longer enough

Most enterprises today are not short of data — they are short of time. By the time a dashboard refreshes, a report circulates, or an analyst responds to a query, the moment to act has often passed. Business conditions shift in hours, not quarters, yet most analytics infrastructure was built to answer yesterday’s questions. That gap between when data is available and when insight translates into action is where competitive advantage is lost.

Conventional analytics wasn’t built for this speed, and its limitations are clear:

- Reactive insights: Dashboards and reports mostly explain past performance rather than guiding decisions in real time.

- High manual effort: Analysts often have to spend time gathering and validating data before offering insights.

- Slow analytical cycles: Many pipelines rely on scheduled batch processing, which makes it harder to respond quickly to emerging trends.

- Rigid pipeline design: Fixed reporting logic and transformations are the foundation of traditional data engineering processes.

- Limited adaptability: As new business questions arise, pipelines and dashboards often need to be updated.

To close the gap, analytics is shifting toward agentic AI. In this approach, intelligent agents operate on top of the existing data engineering infrastructure, dynamically accessing datasets, identifying trends, and supporting decision-making as conditions change.

Platforms like Google Cloud Vertex AI now make this possible: intelligent agents work on top of existing infrastructure rather than waiting to be queried.

Introducing Vertex AI Agent Development Kit (ADK)

The Vertex AI Agent Development Kit (ADK), which is based on Google Cloud Vertex AI, provides a framework for creating agents that can understand goals, plan tasks, retain context, and carry out actions in business environments.

Agents created using ADK concentrate on accomplishing a business goal rather than following rigid rules. After studying the request and assessing important context, such as past trends or operational limitations, the agent creates a plan to achieve the intended result.

In practice, this plays out in a few coordinated steps:

- Goal interpretation: The agent understands the business objective instead of following predefined instructions.

- Planning and reasoning: Complex objectives are broken down into more manageable tasks, such as gathering data, validating conditions, analyzing data, and making recommendations.

- Action execution: The agent interacts with enterprise systems to obtain data, initiate job processing, and deliver structured insights.

- Persistent memory: By keeping track of previous interactions and outcomes, the agent can learn from experience and avoid repeating ineffective actions.
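The four steps above can be pictured as a small loop. The sketch below is a toy illustration in plain Python, not the ADK API: the class `SketchAgent` and its methods are hypothetical names, and `act` only returns a string where a real agent would call enterprise systems.

```python
from dataclasses import dataclass, field


@dataclass
class SketchAgent:
    """Toy agent: interpret a goal, plan tasks, execute them, remember outcomes."""

    memory: list = field(default_factory=list)  # record of past runs

    def interpret(self, request: str) -> str:
        # Goal interpretation: normalise the request into a goal string.
        return request.strip().lower()

    def plan(self, goal: str) -> list:
        # Planning: break the goal into the task types named in the text.
        steps = ("gather", "validate", "analyze", "recommend")
        return [f"{step}: {goal}" for step in steps]

    def act(self, task: str) -> str:
        # Action execution: placeholder for a call to an enterprise system.
        return f"done [{task}]"

    def run(self, request: str) -> list:
        goal = self.interpret(request)
        results = [self.act(task) for task in self.plan(goal)]
        # Persistent memory: keep the goal and its results for later runs.
        self.memory.append({"goal": goal, "results": results})
        return results


agent = SketchAgent()
results = agent.run("  Identify operational bottlenecks ")
```

Each call to `run` appends one entry to `agent.memory`, which is the hook a later planning step could consult.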

Once these agents are designed, Vertex AI Agent Engine provides the runtime environment required to run them reliably at scale. The platform controls execution, divides the workload among multiple agents, and ensures that errors are identified and corrected without interfering with business operations.

How Agentic AI Works with Google Cloud Data and Analytics Platforms

Agentic intelligence builds on existing data platforms. In this model, agents decide how and when to use the data in real time. Streaming pipelines and batch operations continue to supply updated datasets, but instead of waiting for human questions, the agent incorporates them directly into its reasoning loop.

When a business goal is initiated, the agent retrieves relevant data from analytical storage and applies any transformations it needs. It may examine historical patterns, compare them with current signals, and evaluate how those patterns relate to the stated goal. This analysis often runs automatically as new information becomes available, so insights are produced in response to shifting circumstances rather than depending on people to run queries or refresh dashboards on a schedule.
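One simple way to compare a current signal with historical patterns is a standard score. The sketch below is an illustrative assumption, not a method the platform prescribes: `deviation_score`, the sample order counts, and the threshold of 3.0 are all hypothetical.

```python
from statistics import mean, stdev


def deviation_score(history: list[float], current: float) -> float:
    """Return how many sample standard deviations `current` is from the mean."""
    spread = stdev(history)
    if spread == 0:
        return 0.0  # flat history: deviation is undefined, so report none
    return (current - mean(history)) / spread


# Hypothetical daily order counts and today's value.
history = [100.0, 102.0, 98.0, 101.0, 99.0]
score = deviation_score(history, 110.0)
needs_attention = abs(score) > 3.0  # threshold is an illustrative choice
```

An agent could run a check like this each time a new batch or stream window lands and only escalate when `needs_attention` is true.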

Traditionally, data engineering followed a predetermined pattern: dashboards answered expected queries, pipelines transferred data from source systems into warehouses, and transformation logic was hard-coded. When something new came up, it usually meant building new reports, rewriting queries, or reworking pipelines. It also relied heavily on manual coordination between business teams, analysts, and data engineers.

An agentic approach driven by Vertex AI ADK adds intelligence on top of the same foundation. Rather than rebuilding pipelines for each new question, agents interpret the objective and dynamically determine which datasets to access, what transformations to apply, and how to sequence actions. This makes the data architecture more flexible. Pipelines still prepare trusted data, but agents determine how to use it in context, reducing the need for repeated workflow redesign.

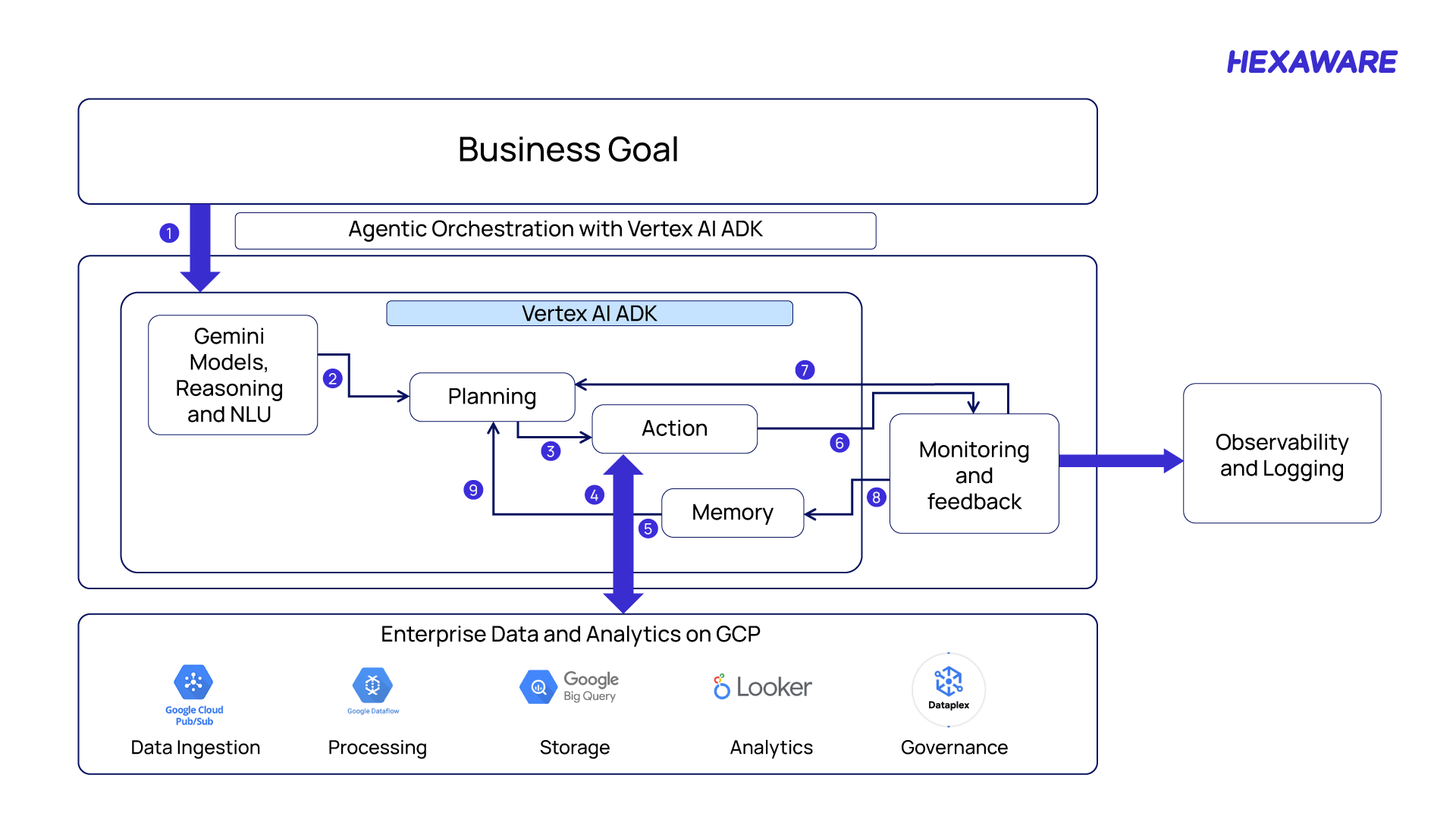

Reference Architecture to Go from Business Goal to Autonomous Insight

Reference architecture for agentic data orchestration using Vertex AI ADK

The architecture traces the journey from intent to execution, with the agent interpreting, planning, and acting along the way.

1. Business Goal Definition

The workflow begins with a clearly defined business objective. The goal focuses on the outcome rather than technical implementation. Examples include:

- Matching job descriptions to suitable consultants

- Identifying operational bottlenecks

- Prioritizing high-impact opportunities

2. Intent Understanding using Gemini Models

The request is interpreted by the Gemini reasoning layer. The system extracts:

- Key entities

- Constraints

- Contextual signals

The objective is converted into structured intent before execution begins.
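As a rough picture of what "structured intent" could look like, the sketch below validates a JSON reply and loads it into a typed record. The `StructuredIntent` fields, the `parse_intent` helper, and the JSON shape are assumptions made for illustration; the reply string is hand-written and is not actual Gemini output.

```python
import json
from dataclasses import dataclass, field


@dataclass
class StructuredIntent:
    objective: str
    entities: list = field(default_factory=list)      # key entities
    constraints: dict = field(default_factory=dict)   # limits on the result
    context: list = field(default_factory=list)       # contextual signals


def parse_intent(model_output: str) -> StructuredIntent:
    """Validate a JSON reply (assumed format) and build a StructuredIntent."""
    data = json.loads(model_output)
    if not data.get("objective"):
        raise ValueError("intent is missing an objective")
    return StructuredIntent(
        objective=data["objective"],
        entities=data.get("entities", []),
        constraints=data.get("constraints", {}),
        context=data.get("context", []),
    )


# Hand-written stand-in for a model reply.
reply = (
    '{"objective": "match job descriptions to consultants", '
    '"entities": ["job description", "consultant"], '
    '"constraints": {"max_results": 5}}'
)
intent = parse_intent(reply)
```

Rejecting a reply with no objective before planning starts keeps a malformed interpretation from turning into executed actions.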

3. Planning within Vertex AI ADK

The Planning component in Vertex AI Agent Development Kit (ADK) breaks the objective into smaller tasks. It determines:

- What actions must be performed

- Which enterprise tools or datasets are required

The result is a dynamic execution plan instead of a fixed workflow.
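One way to think about a dynamic plan is as a dependency graph that is resolved per request rather than a fixed sequence. The sketch below uses Python's standard `graphlib` module; the task names and the `TASK_DEPENDENCIES` table are hypothetical and do not reflect how ADK represents plans internally.

```python
from graphlib import TopologicalSorter

# Hypothetical task graph: each task maps to the tasks it depends on.
TASK_DEPENDENCIES = {
    "gather_data": set(),
    "validate_conditions": {"gather_data"},
    "analyze_data": {"validate_conditions"},
    "recommend": {"analyze_data"},
}


def build_plan(required: set[str]) -> list[str]:
    """Return the required tasks plus their prerequisites in executable order."""
    needed = set()
    stack = list(required)
    while stack:  # walk back through prerequisites
        task = stack.pop()
        if task not in needed:
            needed.add(task)
            stack.extend(TASK_DEPENDENCIES[task])
    graph = {task: TASK_DEPENDENCIES[task] for task in needed}
    # static_order() yields each task only after its prerequisites.
    return list(TopologicalSorter(graph).static_order())


plan = build_plan({"analyze_data"})
# plan == ["gather_data", "validate_conditions", "analyze_data"]
```

A goal that only needs analysis gets a three-step plan, while one that needs a recommendation would get all four, without anyone editing a workflow definition.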

4. Action Execution

The Action layer begins executing the plan created by the planning stage. It coordinates with enterprise data platforms and analytical services.

5. Interaction with Enterprise Data and Analytics Platforms

The agent securely interacts with enterprise data services on Google Cloud, including:

- Pub/Sub – Data ingestion

- Dataflow – Data processing and transformation

- BigQuery – Analytical storage and querying

- Looker – Business intelligence and visualization

- Dataplex – Data governance and management
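A common pattern for letting an agent reach services like these is a tool registry: each capability is registered under a name, and planned actions are dispatched by that name. The sketch below is a plain-Python illustration under that assumption. The tool names such as `bigquery.query` are hypothetical labels, and the functions are stubs that echo their input rather than calling any Google Cloud client library.

```python
from typing import Callable

# Registry of tool name -> callable. Real tools would wrap service clients;
# these stubs only echo their arguments.
TOOLS: dict[str, Callable[[dict], dict]] = {}


def tool(name: str):
    """Decorator that registers a function under a tool name."""
    def register(fn):
        TOOLS[name] = fn
        return fn
    return register


@tool("bigquery.query")
def run_query(args: dict) -> dict:
    return {"tool": "bigquery.query", "sql": args["sql"], "rows": []}


@tool("looker.dashboard")
def open_dashboard(args: dict) -> dict:
    return {"tool": "looker.dashboard", "id": args["id"]}


def call_tool(name: str, args: dict) -> dict:
    """Dispatch a planned action; unknown tools fail loudly, not silently."""
    if name not in TOOLS:
        raise KeyError(f"no tool registered as {name!r}")
    return TOOLS[name](args)


result = call_tool("bigquery.query", {"sql": "SELECT 1"})
```

Keeping the registry explicit also gives governance a single place to see which capabilities an agent is allowed to use.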

6. Monitoring of Execution Outcomes

Once actions are completed, results are evaluated by the monitoring layer. The system checks whether the goal was achieved and whether further refinement is required.

7. Observability and Logging

All activities are recorded through observability and logging systems. This provides traceability, performance monitoring, and operational transparency, and it supports enterprise governance and debugging.

8. Memory Storage

Key outcomes and signals are stored in the agent’s memory layer. This allows the system to retain:

- Previous actions

- Contextual insights

- Execution results

9. Continuous Learning and Re-planning

Stored memory and monitoring feedback are sent back to the planning component. The agent updates its strategy based on previous outcomes. This creates a continuous learning loop, enabling the system to improve over time.
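The feedback loop across steps 6, 8, and 9 can be sketched as follows. This is a simplified assumption of how monitoring, memory, and re-planning might fit together: `run_until_goal`, the strategy names, and the scores are hypothetical, and a real agent would re-plan with far richer context than a set of failed strategies.

```python
def run_until_goal(strategies, execute, goal_met, memory, max_attempts=3):
    """Try strategies in order, skipping any that memory records as failed."""
    for _ in range(max_attempts):
        candidates = [s for s in strategies if s not in memory["failed"]]
        if not candidates:
            return None  # nothing left to try
        strategy = candidates[0]
        outcome = execute(strategy)
        memory["history"].append((strategy, outcome))  # memory storage
        if goal_met(outcome):                           # monitoring check
            return strategy
        memory["failed"].add(strategy)                  # feeds re-planning
    return None


memory = {"failed": set(), "history": []}
# Hypothetical strategies scored by a stand-in executor.
scores = {"keyword_match": 0.4, "skills_embedding": 0.9}
chosen = run_until_goal(
    strategies=["keyword_match", "skills_embedding"],
    execute=lambda s: scores[s],
    goal_met=lambda score: score >= 0.8,
    memory=memory,
)
```

Because `memory` outlives the call, a later run with the same memory would skip `keyword_match` immediately instead of repeating an approach that already fell short.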

Why Agentic AI with Vertex AI Is Critical in 2026

In 2026, insights are required during events rather than after they occur. Because of this shift, businesses are moving toward autonomous, agent-driven analytics, in which intelligent systems assist with real-time signal interpretation and decision guidance. Google Cloud Vertex AI makes this shift possible, with Pub/Sub, Dataflow, and BigQuery forming the data layer and Vertex AI ADK providing the reasoning layer across all of them.

Analytics progresses from static reporting to continuous decision assistance when these layers work together. Without waiting for manual inquiries, agents can interpret objectives, retrieve pertinent datasets, evaluate signals, and initiate responses.

For instance, Hexaware partnered with a global insurance provider to reduce their operational costs by 30% through GenAI-powered automated code conversion agents, transforming legacy SAS scripts into efficient PySpark workloads.

Another European insurer achieved full visibility into a complex, undocumented SAS landscape using Hexaware’s AI-led code intelligence platform, accelerating migration timelines and reducing dependency on scarce subject matter experts.

For a retail client, a fine-tuned GenAI solution based on PaLM 2 on GCP increased product listing conversions by 20% while cutting manual content creation effort by 75%, significantly improving product discoverability.

Build Agentic AI-driven Data and Analytics at Scale with Hexaware and Google Cloud

Translating business goals into autonomous analytics requires more than platform access — it requires implementation experience. Hexaware is doing this work in production, enabling enterprises across industries to harness the power of agentic AI on Google Cloud.

By leveraging Google Cloud’s Vertex AI Agent Development Kit (ADK) alongside Hexaware’s proprietary AI frameworks, accelerators, and best practices, Hexaware delivers scalable, secure, and governed agentic AI solutions tailored to each customer’s unique environment. This approach seamlessly integrates advanced AI reasoning with existing data pipelines and analytics platforms, ensuring enterprises maximize the value of their cloud investments without disruption.

For enterprises ready to move beyond dashboards, Hexaware and Google Cloud’s partnership offers a clear path from first deployment to enterprise-scale autonomous analytics.